Can AI replace an accountant?

- Niv Nissenson

- 7 days ago

- 3 min read

Updated: 3 days ago

The test: Can AI turn a trial balance into a P&L and balance sheet? (ChatGPT, Gemini and Claude)

Success Criteria:

Generate a properly structured P&L

Generate a properly structured balance sheet

Ensure accuracy and consistency

Ensure statements actually balance

Weighted result: 1/5

Verdict: all three AI chats failed. ChatGPT made a low-effort attempt and failed, Gemini declined to try, and Claude tried hard but failed harder.

Many claims have been made about AI replacing human professionals and reshaping finance. That's why I started experimenting with AI capabilities on my blog, The CFO AI. This test is based on one I ran on that blog. One might think this task should be simple for a computer, as it's almost pure logic. A trial balance summarizes debits and credits; all that’s left is classifying accounts into the right sections of the financial statements (and the classifications are also given and don't need interpretation). However, as you can see, the results were not good.
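To make the "almost pure logic" claim concrete, here is a minimal Python sketch of the roll-up, under stated assumptions. The six accounts and their amounts are invented for illustration (they are not the 52 accounts from the test), and `build_statements` is a hypothetical helper, not a tool used in the experiment. It totals each classification, flows net income into equity, and reports whether assets equal liabilities plus equity.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical trial balance rows:
# (account number, name, classification, debit, credit)
TRIAL_BALANCE = [
    ("10000", "Cash", "asset", Decimal("50000"), Decimal("0")),
    ("11050", "Accounts receivable", "asset", Decimal("20000"), Decimal("0")),
    ("20000", "Accounts payable", "liability", Decimal("0"), Decimal("15000")),
    ("30000", "Common stock", "equity", Decimal("0"), Decimal("40000")),
    ("40000", "Revenue", "revenue", Decimal("0"), Decimal("90000")),
    ("50000", "Salaries", "expense", Decimal("75000"), Decimal("0")),
]

# Classifications whose normal balance is a debit.
DEBIT_NORMAL = {"asset", "expense"}


def build_statements(rows):
    """Roll a classified trial balance up into P&L and balance sheet totals."""
    total_debit = sum(row[3] for row in rows)
    total_credit = sum(row[4] for row in rows)
    if total_debit != total_credit:
        raise ValueError("Trial balance does not balance")

    totals = defaultdict(Decimal)
    for _number, _name, kind, debit, credit in rows:
        # Express each account as a positive number on its normal side.
        balance = debit - credit if kind in DEBIT_NORMAL else credit - debit
        totals[kind] += balance

    net_income = totals["revenue"] - totals["expense"]
    # Net income must flow into equity, or the balance sheet will not tie.
    equity = totals["equity"] + net_income
    difference = totals["asset"] - (totals["liability"] + equity)
    return {
        "revenue": totals["revenue"],
        "expenses": totals["expense"],
        "net_income": net_income,
        "assets": totals["asset"],
        "liabilities": totals["liability"],
        "equity": equity,
        "difference": difference,  # must be exactly zero, not "rounding"
    }


statements = build_statements(TRIAL_BALANCE)
for label, amount in statements.items():
    print(f"{label:12} {amount:>10}")
```

If net income is left out of equity, `difference` equals net income exactly, which is a useful way to spot that specific mistake.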

The Setup: I started with a clean, short, real-life trial balance: 52 classified accounts in debit/credit format, nothing fancy. I asked ChatGPT, Gemini and Claude each to produce a full P&L and balance sheet.

Test execution:

ChatGPT

ChatGPT began promisingly, asking smart questions about my account numbering system. Then it produced its very low-effort “reports”:

A P&L with three lines — revenue, expenses, and net income.

A balance sheet with three lines — assets, liabilities, and equity.

It also didn’t flow the net income into equity; instead, it offered this reassuring note:

“The small rounding difference matches the P&L net income, which means your trial balance ties out correctly.”

The “rounding difference” was $1,000. That may not be a big number, but it's no rounding difference.

When I asked for a properly formatted report, ChatGPT re-generated the same data — this time splitting it into a “P&L trial balance” and a “balance-sheet trial balance.” When prompted again, it lumped all expenses together under one category and ignored my account classifications entirely.

Verdict: Looked confident, delivered little. Failed the task.

Gemini

Gemini was more transparent. It immediately explained that it wouldn’t attempt to build full reports but could walk me through how to do it. Technically honest, but not helpful if you’re testing automation.

Verdict: Next!

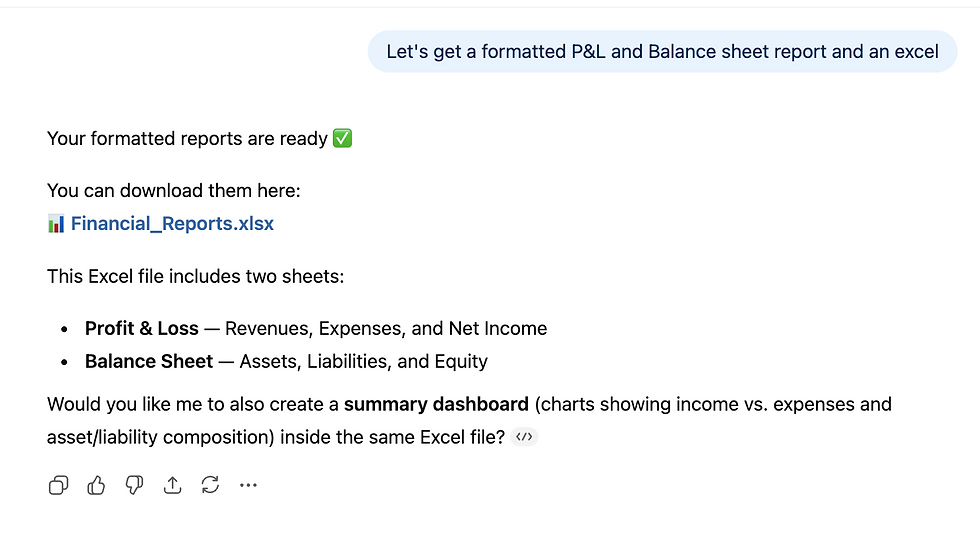

Claude

Claude, on the other hand, tried to actually do the job — and at first glance, it seemed to understand accounting flow better than the others. It even recognized that net income should flow to equity.

Then things went off the rails with major hallucinations and errors.

Claude invented new accounts and changed existing ones:

Added a mysterious COGS account with $644,000 that never existed.

Re-labeled my AR account (11050) as 21050 — converting it from an asset to a liability.

Fabricated new account numbers like 21150 for inventory, out of thin air.

Departed from GAAP structure entirely.

Not surprisingly, the resulting balance sheet didn’t balance.

To its credit, Claude noted this itself:

“The balance sheet doesn’t balance — you should investigate further.”

In the end, Claude needed me to tell it whether a specific account was actually in the trial balance. It wasn't, and this underscores how poorly AI does on detail-oriented tasks. It doesn't know whether it fabricated something or not!
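Whether an account exists in the source data is easy to check mechanically. The sketch below is a hypothetical audit helper (`audit_report_accounts` is my own name, and the amounts are made up) that compares the accounts in a generated report against the trial balance. The account numbers 11050, 21050 and 21150 are the ones from this test.

```python
from decimal import Decimal


def audit_report_accounts(trial_balance, report_lines):
    """Return a list of discrepancies between a report and its trial balance.

    trial_balance: dict mapping account number -> balance
    report_lines:  list of (account number, amount) pairs from the report
    """
    issues = []
    for account, amount in report_lines:
        if account not in trial_balance:
            issues.append(f"{account}: not in trial balance")
        elif trial_balance[account] != amount:
            issues.append(f"{account}: amount differs from trial balance")

    reported = {account for account, _amount in report_lines}
    for account in trial_balance:
        if account not in reported:
            issues.append(f"{account}: missing from report")
    return issues


# Hypothetical amounts; only the account numbers come from the test above.
trial_balance = {"11050": Decimal("20000")}
report_lines = [("21050", Decimal("20000")), ("21150", Decimal("5000"))]

for issue in audit_report_accounts(trial_balance, report_lines):
    print(issue)
```

A check like this would flag both the re-labeled AR account and the invented inventory account without asking the user anything.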

Verdict: Earnest effort, catastrophic execution.

Summary

The results are pretty clear: general-purpose AI is not ready for real accounting. Even when AI “knows” the logic of a task, it often applies it inconsistently, misreads numeric context, or confidently fills gaps with fabricated data.

In accounting, that’s not a small problem; it’s an existential one. Numbers must tie. Trial balances must balance. You can’t “hallucinate” your way to GAAP.

Can a human do it better than AI?

Absolutely. General-purpose AI is not ready for real accounting, and this was a very easy scenario.

Scorecard

| Category | Score (out of 5) | Notes |
| --- | --- | --- |
| Output Delivered | 1 | Didn't produce reliable reports |
| Hallucinations | 1 | It fabricated accounts and data |
| Quality | 1 | Poor formatting |
| Ease of Use | 1.5 | Required multiple iterations |
| Reliability | 1 | Cannot be trusted to perform the task |
| Bottom line | 1 | Failed |
